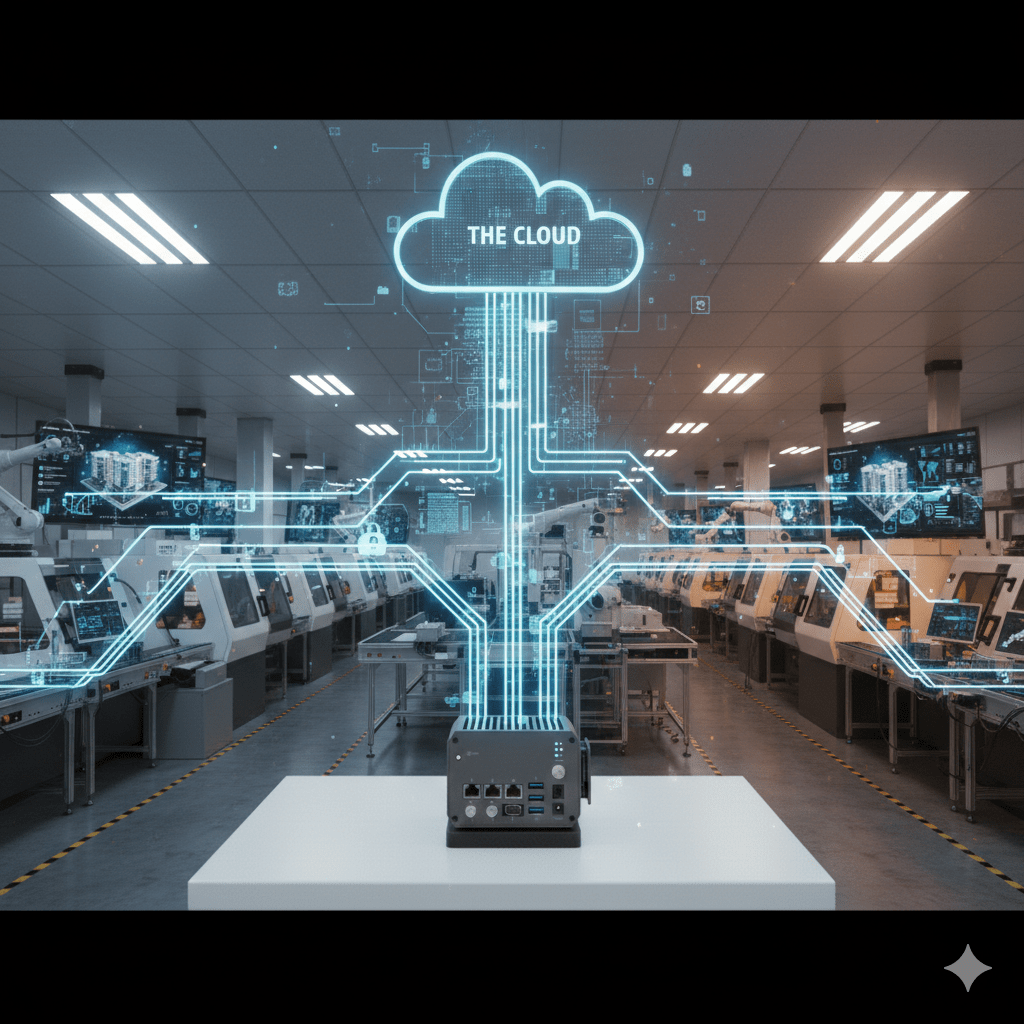

Edge Computing Gateway: Bridge Between Cloud and Factory

The digital transformation of manufacturing operations increasingly relies on cloud platforms for advanced analytics, machine learning, and enterprise-wide data visualization. However, sending all industrial data to centralized cloud servers introduces latency, consumes excessive bandwidth, and creates single points of failure that compromise operational continuity. Advantech edge computing gateways solve this challenge by processing data locally at the network edge while maintaining selective cloud connectivity, enabling manufacturers to leverage cloud capabilities without sacrificing real-time responsiveness or operational independence.

The UNO-2372G exemplifies modern edge computing architecture, with Intel Core i3/i5 processors providing substantial computational resources directly on the factory floor. The platform runs containerized applications through Docker, allowing manufacturers to deploy custom analytics, quality inspection algorithms, or predictive maintenance models locally. A pharmaceutical manufacturer might run statistical process control calculations on tablet weight measurements, alerting cloud systems only when trends indicate potential issues rather than streaming every measurement. Edge processing of this kind can reduce cloud data volumes by as much as 90% while maintaining millisecond response times for local control decisions.
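The statistical process control idea above can be sketched in a few lines of Python. This is a minimal illustration, not Advantech software: the function names, the 3-sigma limits computed from a plain sample standard deviation, and the run length of 8 are all assumptions chosen for the example.

```python
from statistics import mean, stdev


def control_limits(baseline, sigmas=3.0):
    """Return (lower, center, upper) limits from in-control baseline weights.

    Assumption: limits are center +/- sigmas * sample standard deviation.
    """
    center = mean(baseline)
    spread = stdev(baseline)
    return center - sigmas * spread, center, center + sigmas * spread


def check_sample(weight_mg, recent, limits, run_length=8):
    """Classify one new weight; `recent` holds earlier weights, newest last."""
    lower, center, upper = limits
    if weight_mg < lower or weight_mg > upper:
        return "out_of_control"
    # A run of consecutive points on one side of the center line suggests drift.
    window = (recent + [weight_mg])[-run_length:]
    if len(window) == run_length and (
        all(w > center for w in window) or all(w < center for w in window)
    ):
        return "trend"
    return "ok"
```

In a deployment along these lines, only the `"trend"` and `"out_of_control"` results would be forwarded to the cloud, while `"ok"` measurements stay on the gateway.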

Containerization and Application Deployment

Traditional industrial PCs required custom operating system images, manual software installation, and complex dependency management. The UNO-2484G supports Docker containerization, packaging applications with all dependencies into portable units deployable across the entire gateway fleet. A vibration analysis container developed for one production line deploys to 50 identical lines with a single command, ensuring consistency and accelerating rollouts. Container orchestration through Kubernetes enables sophisticated deployment strategies – blue/green deployments run new application versions alongside current versions for testing, then instantly switch traffic when validation completes.

The gateways support multi-container architectures where specialized functions run in isolated environments. One container might handle MQTT communications with cloud platforms, another might process video streams from inspection cameras using OpenCV, and a third might run machine learning inference using TensorFlow Lite. Containers exchange data over internal virtual networks, which is faster than routing it through external APIs and enables complex edge workflows. Resource limits prevent misbehaving containers from consuming all CPU or memory, maintaining operational stability even during application faults.

Local Data Processing and Analytics

Edge analytics transform raw sensor streams into actionable information before cloud transmission. The EPC-U2217 embedded PC performs FFT analysis of vibration data locally, identifying bearing faults from the frequency spectrum without uploading raw accelerometer data. Temperature trend analysis detects gradual drifts that indicate failing heating elements hours before traditional threshold alarms trigger. Energy consumption calculations integrate instantaneous power measurements over time, producing hourly and daily summaries that are uploaded to cloud platforms in place of second-by-second readings.
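Two of the calculations described above, energy integration and drift detection, are simple enough to sketch with the Python standard library. The function names and the `(timestamp_seconds, value)` sample layout are assumptions made for illustration; a real deployment would read samples from its own data source.

```python
def integrate_energy_kwh(samples):
    """Trapezoidal integration of (timestamp_seconds, power_kw) samples into kWh."""
    total_kw_seconds = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        total_kw_seconds += (p0 + p1) / 2.0 * (t1 - t0)
    return total_kw_seconds / 3600.0


def drift_slope(samples):
    """Least-squares slope of (timestamp_seconds, value) samples, in units per second."""
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    den = sum((t - mean_t) ** 2 for t, _ in samples)
    if den == 0:
        raise ValueError("need at least two samples with distinct timestamps")
    num = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    return num / den
```

A slope that stays above a chosen limit over a long window points to gradual drift even while every individual reading is still inside its alarm threshold.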

Machine learning inference at the edge enables computer vision quality inspection, anomaly detection, and predictive maintenance without cloud dependencies. Pre-trained models developed in cloud environments deploy to edge gateways for real-time inference. A surface defect detector processes camera images at 30 frames per second and uploads only the images with detected defects for human review. This can reduce cloud storage costs by as much as 95% while still documenting every detected defect. The gateways support TensorFlow Lite, ONNX Runtime, and PyTorch Mobile inference engines, providing flexibility in model formats.

Hybrid Cloud-Edge Architectures

The ADAM-3600 demonstrates hybrid operations where local control functions run independently while selective data synchronizes with cloud platforms. A water treatment plant’s chemical dosing control operates locally on the gateway, maintaining safe chlorine levels even during internet outages. Historical data, operator interventions, and system alarms upload to cloud platforms when connectivity permits, enabling remote monitoring and regulatory compliance reporting. This architecture provides autonomous operation with cloud-enhanced visibility.

Bidirectional synchronization keeps local and cloud systems aligned. Cloud-based dashboards display real-time operational status, while configuration changes in cloud management interfaces propagate to edge gateways. An operator adjusting PID controller parameters through a cloud interface sees those changes deploy to the edge gateway within seconds. This bidirectional capability enables remote commissioning, troubleshooting, and optimization without site visits, reducing service costs for geographically distributed operations.

Protocol Translation and Integration

Edge gateways function as protocol translators, accepting data from industrial devices using legacy protocols and republishing to cloud platforms using modern APIs. Modbus RTU temperature sensors, BACnet building controllers, and OPC UA manufacturing systems all connect to the same gateway. The edge processor normalizes data into JSON format, adds timestamps and quality flags, then publishes via MQTT to AWS IoT Core, Azure IoT Hub, or private cloud brokers. This translation occurs locally with sub-second latency rather than relying on cloud-based integration platforms.
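The normalization step can be illustrated with a short Python sketch. The field names, the `good`/`bad`/`uncertain` quality values, and the scaling parameters are hypothetical and do not describe a specific Advantech or cloud-vendor schema; publishing the resulting string over MQTT is left out.

```python
import json
from datetime import datetime, timezone


def normalize_reading(protocol, tag, raw_value, scale=1.0, unit="",
                      valid_range=None, timestamp=None):
    """Convert one raw device value into a protocol-neutral dictionary."""
    value = raw_value * scale
    if valid_range is None:
        quality = "uncertain"  # no range configured, so the value cannot be checked
    elif valid_range[0] <= value <= valid_range[1]:
        quality = "good"
    else:
        quality = "bad"
    ts = timestamp or datetime.now(timezone.utc)
    return {
        "tag": tag,
        "protocol": protocol,
        "value": value,
        "unit": unit,
        "quality": quality,
        "timestamp": ts.isoformat(),
    }


def to_payload(reading):
    """Serialize a normalized reading as compact JSON for publishing."""
    return json.dumps(reading, separators=(",", ":"), sort_keys=True)
```

For example, a Modbus register holding `235` with a scale of `0.1` would become a reading of about 23.5 with whatever unit the integrator configured, regardless of which protocol delivered it.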

The gateways support bidirectional control, receiving commands from cloud dashboards and translating them to the appropriate industrial protocols. A facility manager viewing a cloud dashboard clicks a button to adjust an HVAC setpoint; the gateway receives the MQTT message and writes the corresponding Modbus register on the building controller. Security policies enforce authorization – not all cloud users can issue control commands, and audit logs track all remote interventions. Rate limiting prevents accidental or malicious command flooding that might overwhelm field devices.
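One common way to combine authorization, rate limiting, and audit logging is a token bucket in front of the command handler. The sketch below assumes a hypothetical `operator` role and an in-memory audit list; it shows the pattern only and is not the gateway's actual policy engine.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate_per_s` tokens per second."""

    def __init__(self, rate_per_s, capacity, clock=time.monotonic):
        self.rate = rate_per_s
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self):
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


def handle_command(user_roles, command, bucket, audit_log):
    """Authorize, rate-limit, and audit one cloud command.

    Returns True only if the command may be written to the field device.
    """
    if "operator" not in user_roles:  # hypothetical role name
        outcome = "denied"
    elif not bucket.allow():
        outcome = "rate_limited"
    else:
        outcome = "accepted"
    audit_log.append({"command": command, "outcome": outcome})
    return outcome == "accepted"
```

Every attempt is logged, including rejected ones, so the audit trail also records who tried to issue a command they were not allowed to send.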

Data Buffering and Store-and-Forward

Network outages must not compromise operational data integrity. The UNO-2372G buffers up to 100,000 data points locally while cloud connectivity is lost and uploads them automatically when the connection is restored. Priority queuing ensures critical alarms transmit first, followed by historical data and diagnostic information. Compression reduces upload times and data costs – JSON payloads typically compress by 60-80% with gzip, significantly reducing cellular data consumption for remote sites.
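A store-and-forward buffer with priority queuing and gzip compression might look like the following sketch. The class name, the three record kinds, the batch size, and the reject-when-full overflow policy are assumptions for illustration; the actual firmware behavior is not documented here.

```python
import gzip
import heapq
import itertools
import json

# Lower number = transmitted first (assumed ordering: alarms, history, diagnostics).
PRIORITY = {"alarm": 0, "history": 1, "diagnostic": 2}


class StoreAndForwardBuffer:
    def __init__(self, capacity=100_000):
        self.capacity = capacity
        self._heap = []
        self._seq = itertools.count()  # tie-breaker keeps FIFO order within a priority

    def __len__(self):
        return len(self._heap)

    def put(self, kind, record):
        """Queue a record; returns False when the buffer is full."""
        if len(self._heap) >= self.capacity:
            return False
        heapq.heappush(self._heap, (PRIORITY[kind], next(self._seq), record))
        return True

    def drain_batch(self, max_records=500):
        """Pop the highest-priority records and return them as gzip-compressed JSON."""
        batch = []
        while self._heap and len(batch) < max_records:
            _, _, record = heapq.heappop(self._heap)
            batch.append(record)
        return gzip.compress(json.dumps(batch).encode("utf-8"))
```

This sketch keeps everything in memory and discards records as soon as they are drained. A production buffer would more likely persist records to disk and delete them only after the cloud acknowledges the upload.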

Time-series databases running on edge gateways provide sophisticated local storage beyond simple buffers. InfluxDB or TimescaleDB containers store weeks of historical data locally, enabling local HMI trending and analytics without cloud connectivity. Periodic cloud synchronization uploads aggregated summaries rather than raw data – hourly averages, daily peaks, and monthly totals provide adequate visibility for enterprise reporting while minimizing bandwidth consumption. Full-resolution data remains at the edge for detailed local analysis when needed.
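The aggregation step can be sketched without any database at all. The function below assumes samples arrive as `(epoch_seconds, value)` pairs and groups them into hourly buckets; in practice the same result would usually come from a query against the local time-series database.

```python
from collections import defaultdict


def hourly_summaries(samples):
    """Group (epoch_seconds, value) samples by hour; return one summary per hour."""
    buckets = defaultdict(list)
    for ts, value in samples:
        hour_start = int(ts // 3600) * 3600
        buckets[hour_start].append(value)
    return [
        {
            "hour_start": hour_start,
            "mean": sum(values) / len(values),
            "min": min(values),
            "max": max(values),
            "count": len(values),
        }
        for hour_start, values in sorted(buckets.items())
    ]
```

Uploading one summary per hour in place of one reading per second replaces 3,600 values with a handful, while the raw samples remain available locally.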

Security and Access Control

Connecting factory networks to cloud platforms creates cybersecurity concerns that edge gateways address through multiple protective layers. Physically separated network interfaces isolate production control networks from IT/cloud networks. Firewalls filter bidirectional traffic, permitting only authorized communications. VPN tunnels encrypt all cloud traffic, preventing eavesdropping on data traversing public internet connections. Certificate-based mutual authentication ensures both edge gateways and cloud endpoints verify each other’s identity before data exchange.

Container security scanning prevents deployment of vulnerable application images. Signature verification ensures containers originate from trusted registries. Runtime security monitors container behavior, alerting on suspicious activities like unauthorized network connections or privilege escalation attempts. Regular security updates address newly discovered vulnerabilities in the operating system, Docker runtime, and deployed applications, with Advantech maintaining support throughout each product’s lifecycle.

Real-World Implementation Example

A food processing facility deployed 15 UNO-2484G gateways across production lines making frozen meals. Each gateway connects to 40-60 devices, including metal detectors, checkweighers, X-ray inspection systems, and label printers. Local processing validates product weights against specifications and rejects out-of-specification units immediately. Quality data is aggregated into shift reports that upload to a cloud-based quality management system. When internet connectivity failed during a storm, production continued uninterrupted with local quality enforcement. The buffered quality data uploaded automatically once connectivity was restored, maintaining regulatory compliance documentation without manual intervention. The project reduced cloud data costs by 85% while improving quality response times from 5-minute batch delays to real-time rejection.

Frequently Asked Questions

What processing power do edge computing gateways provide?

Advantech edge gateways range from Intel Celeron processors (UNO-1372G) to Intel Core i3/i5/i7 processors (UNO-2372G, UNO-2484G, EPC-U2217) with 4-32GB RAM. This provides substantial computational capability for edge analytics, machine learning inference, and containerized applications while maintaining industrial reliability and power efficiency.

Can I run Windows or Linux applications on these gateways?

Yes, the gateways support both Windows 10 IoT Enterprise and various Linux distributions (Ubuntu, Debian, CentOS). Docker containerization makes applications portable across gateways, although Linux containers still need a Linux kernel, either natively or through a virtualization layer on Windows. Many deployments use Linux for lower resource consumption and better container support, while Windows suits applications that require specific Windows libraries or compatibility.

How do edge gateways handle software updates without disrupting production?

Container orchestration enables zero-downtime updates. New application versions deploy to staging containers and undergo testing, then production traffic switches over to them. The old version remains available for instant rollback if issues arise. Operating system updates can be scheduled during maintenance windows or can use A/B partition schemes, where updates install to the inactive partition.

What machine learning frameworks are supported?

The gateways support TensorFlow Lite, ONNX Runtime, PyTorch Mobile, and OpenVINO for running inference on pre-trained models. Model training typically occurs in cloud environments with GPUs, then compressed models deploy to edge gateways for inference. Common applications include computer vision defect detection, predictive maintenance, and anomaly detection.

How much local data storage is available?

Storage varies by model – typically 32-256GB SSD or industrial-grade flash memory. Additional storage via USB, SATA SSD, or SD cards extends capacity. For time-series data, databases like InfluxDB can store weeks to months of high-resolution data locally. Periodic cloud synchronization or manual archive retrieval provides access to older data.

Can edge gateways operate completely independently from cloud platforms?

Absolutely. Many deployments operate entirely locally with no cloud connectivity, using edge gateways purely for protocol conversion, data aggregation, and local analytics. Cloud integration is optional and can be added later as requirements evolve. The gateways provide standalone value through local processing capabilities.

What happens if the edge gateway fails?

High-availability deployments use redundant gateways with automatic failover. Configuration backups stored on SD cards or cloud storage enable rapid replacement – swap the failed gateway, restore configuration, and resume operation in 15-30 minutes. For critical applications, dual gateways in hot standby mode provide sub-second failover with no data loss.

How difficult is it to develop custom edge applications?

Difficulty varies by application complexity. Simple data processing uses visual programming tools like Node-RED included with Advantech software. More complex applications require programming skills in Python, JavaScript, or compiled languages. Advantech provides SDKs, example code, and technical support. Many system integrators specialize in edge application development.