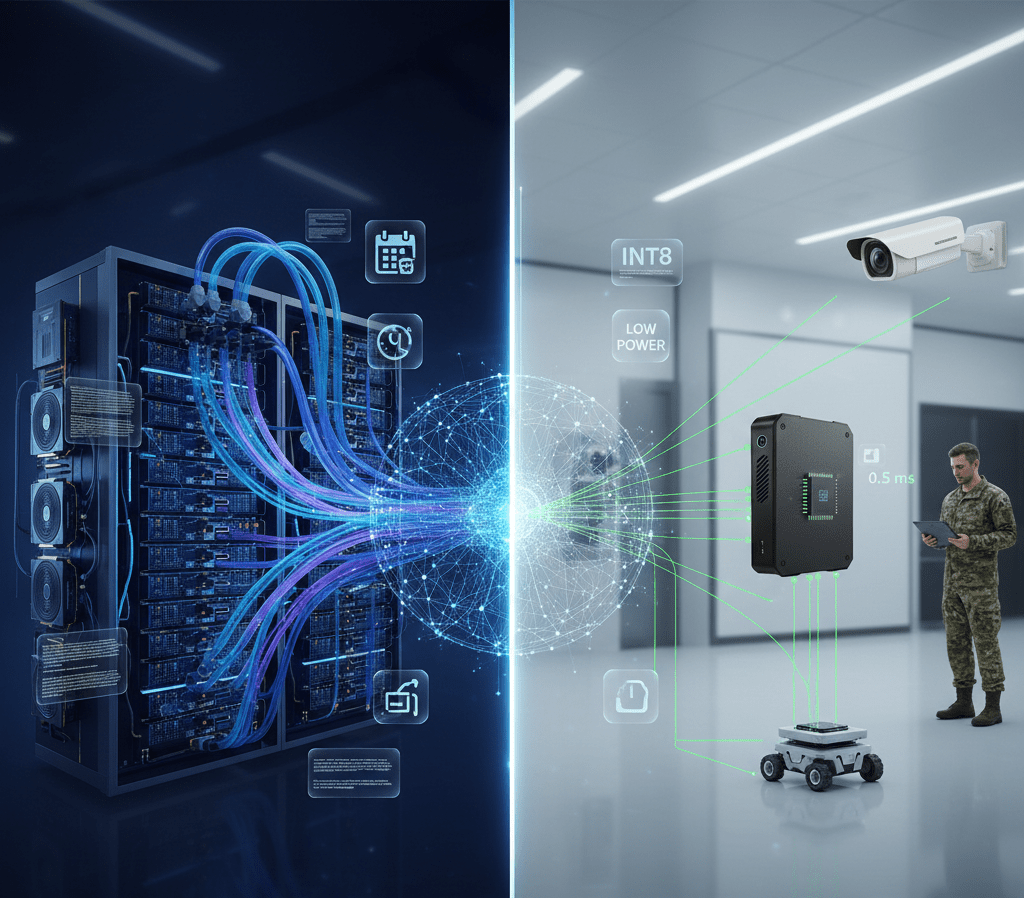

AI Model Training vs Inference Computing Requirements

AI workflows separate into distinct training and inference phases with dramatically different computational requirements. Advantech edge AI systems optimize for inference deploying trained models locally while training occurs in cloud or datacenter environments.

Training Computational Demands

Training neural networks requires processing millions of examples through billions of parameters over thousands of iterations. GPUs with large memory (16-80GB) enable batch processing. Multi-GPU configurations parallelize training across dozens of accelerators. Cloud platforms provide massive computational resources for hours or days of intensive training workloads.

Inference Optimization

Inference runs trained models on individual inputs requiring millisecond latencies. Edge devices use quantized models (INT8 vs FP32) reducing memory and computation 4x. Single-image batching prioritizes latency over throughput. Power-efficient accelerators maintain continuous operation on limited power budgets.

Hybrid Architecture Benefits

Train in cloud leveraging unlimited GPUs, dataset storage, and development tools. Deploy to edge for low-latency inference, data privacy, and offline operation. Cloud-to-edge pipelines automate model optimization, packaging, and deployment. OTA updates refresh edge models incorporating improved versions trained on accumulated production data.

Edge Training Considerations

Some applications require edge training – personalizing models to specific environments, adapting to changing conditions, or privacy-sensitive scenarios preventing cloud data transmission. Transfer learning fine-tunes pre-trained models on limited edge hardware. Federated learning aggregates improvements from multiple edge devices without centralizing data.

FAQ

Can training occur on edge devices?

Limited training possible but challenging. Full model training requires substantial memory, computation, and time impractical for most edge devices. Transfer learning fine-tuning pre-trained models proves more feasible. Most deployments train in cloud and deploy to edge for inference.

How often do edge models require updates?

Depends on application stability. Quality inspection models may operate years without updates if products/processes remain constant. Autonomous systems benefit from quarterly updates incorporating learned improvements. OTA update infrastructure enables frequent refreshes without physical access.