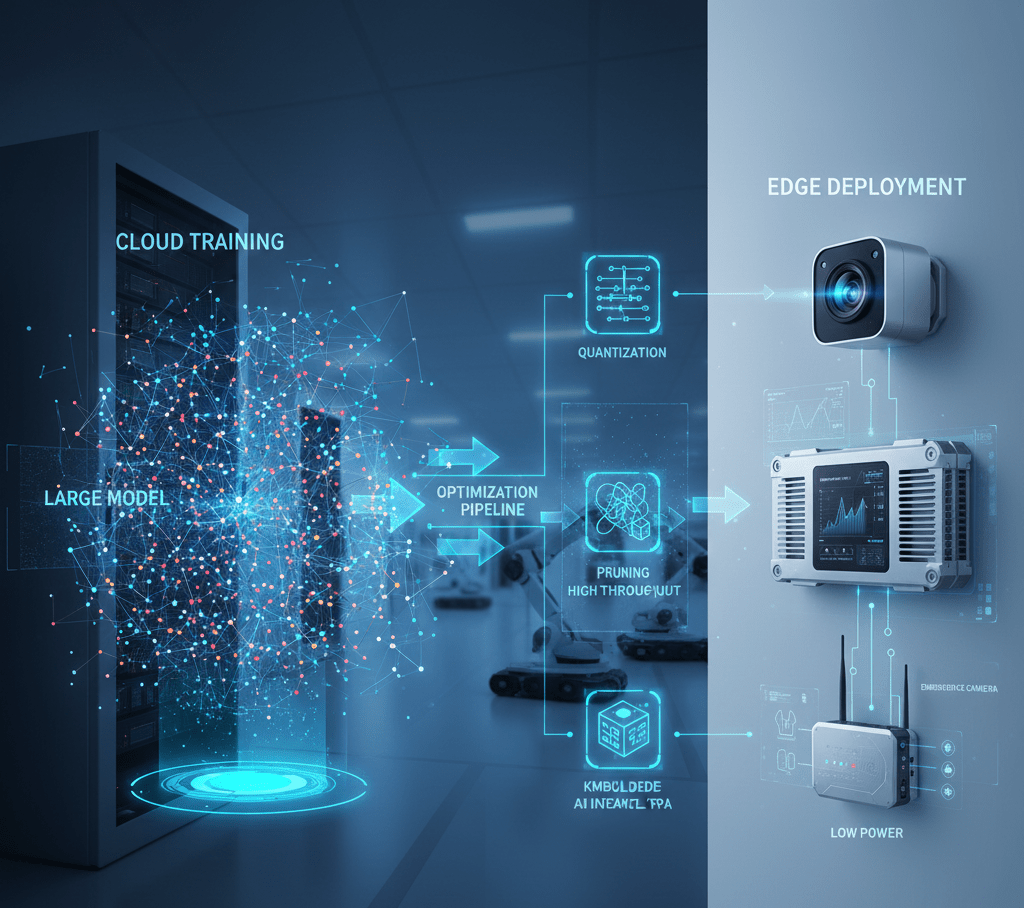

Deep Learning Model Optimization for Edge Deployment

Neural networks trained in cloud environments with unlimited computational resources prove too large and slow for edge devices. Advantech supports model optimization techniques reducing size and accelerating inference while maintaining accuracy enabling deployment on resource-constrained industrial systems.

Quantization and Precision Reduction

Training uses 32-bit floating point (FP32) for maximum accuracy. Post-training quantization converts weights to 8-bit integers (INT8) reducing model size 4x and increasing speed 2-4x with minimal accuracy loss (<1% typical). Quantization-aware training simulates quantization effects during training improving INT8 accuracy. TensorRT and OpenVINO automate quantization workflows.

Model Pruning

Structured pruning removes entire channels, filters, or layers identified as redundant through sensitivity analysis. Achieves 30-50% size reductions with 1-2% accuracy trade-offs. Unstructured pruning zeros individual weights achieving higher compression but requiring sparse computation support. Iterative pruning alternates between pruning steps and fine-tuning maintaining accuracy.

Knowledge Distillation

Large “teacher” models train compact “student” models through soft target matching. Students learn to mimic teacher outputs achieving similar accuracy in smaller architectures. Particularly effective for models requiring substantial training data; students leverage teacher knowledge without full dataset access.

Neural Architecture Search

Automated techniques discover efficient architectures for specific tasks and hardware. MobileNet, EfficientNet, and SqueezeNet families provide pre-optimized architectures balancing accuracy and efficiency. Hardware-aware NAS considers target device constraints during architecture search.

FAQ

How much optimization is possible without accuracy loss?

Typical optimizations achieve 4-10x speedup with <2% accuracy reduction through quantization and pruning. Aggressive optimization reaching 20-50x speedup may sacrifice 5-10% accuracy. Requirements vary by application – some tolerate accuracy trade-offs for performance while others prioritize accuracy.

Which optimization techniques should I apply?

Start with quantization (easy, significant benefits). Add pruning if further reduction needed. Consider knowledge distillation for complex models or limited training data. Layer fusion and graph optimization (automatic in frameworks) provide additional speedup. Evaluate techniques iteratively measuring accuracy impact.