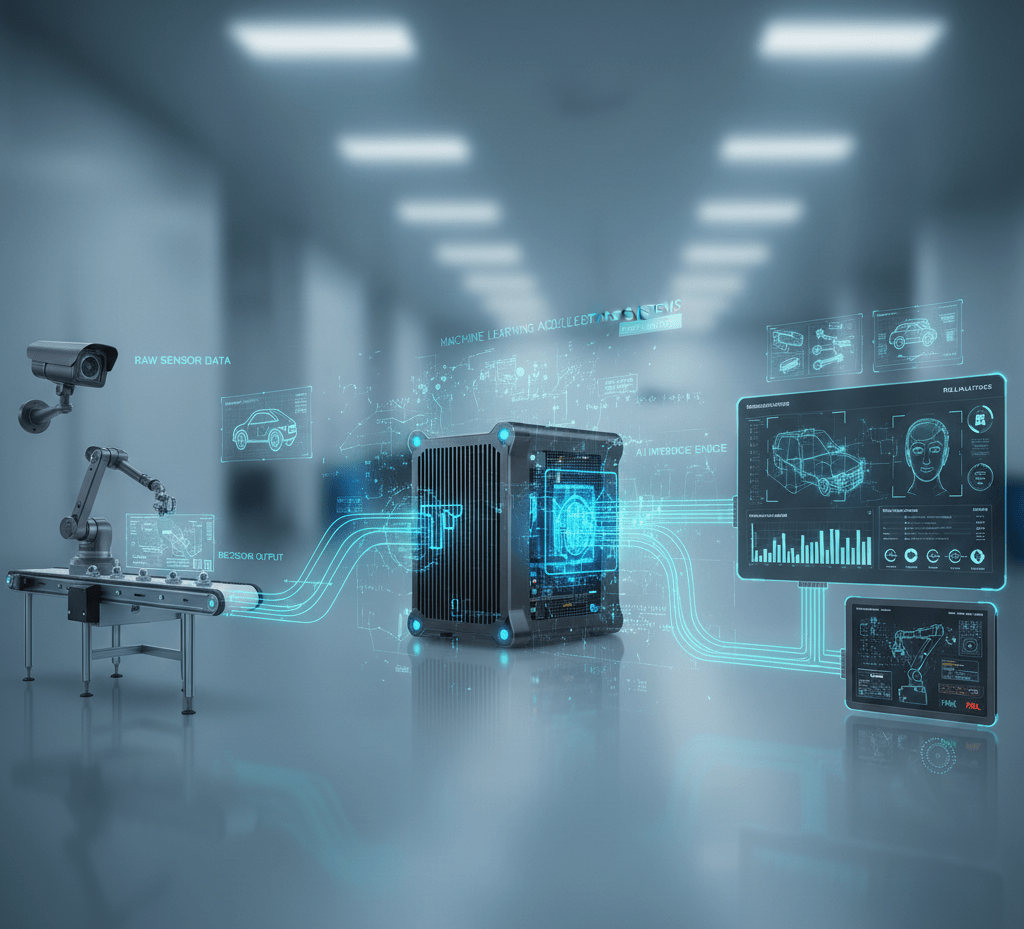

Machine Learning Inference Accelerator Hardware Systems

CPU-only inference often falls short for real-time AI applications that require millisecond-level latency and high throughput. Advantech systems integrate specialized AI accelerators that can speed up inference 10-100x over CPU-only execution, depending on the model and hardware, while reducing power consumption and making more sophisticated models practical to deploy.

NVIDIA GPU Acceleration

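NVIDIA GPUs provide the massively parallel processing that neural networks need. Jetson embedded modules integrate GPU, CPU, and memory in compact system-on-modules (SoMs) that typically consume 5-30W, depending on module and power mode (top-end Jetson AGX Orin modules can be configured up to 60W). Discrete data center GPUs (formerly branded Tesla) and RTX GPUs offer higher performance for edge servers. On Volta and later architectures, Tensor Cores accelerate the mixed-precision matrix operations common in inference workloads.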
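Tensor Cores multiply FP16 inputs and accumulate the results at higher (FP32) precision. The sketch below is a pure-Python numerical illustration of why that matters, not a way to program Tensor Cores: it emulates FP16 and FP32 rounding with the standard `struct` module (format codes `e` and `f`) and compares an FP16 accumulator with an FP32 accumulator on a long dot product. The function names are hypothetical.

```python
import struct
from typing import Sequence


def to_fp16(x: float) -> float:
    """Round x to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round x to the nearest IEEE 754 single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def dot_fp16_accumulate(a: Sequence[float], b: Sequence[float]) -> float:
    """Dot product with FP16 inputs, FP16 products, and an FP16 running sum."""
    acc = 0.0
    for x, y in zip(a, b):
        prod = to_fp16(to_fp16(x) * to_fp16(y))
        acc = to_fp16(acc + prod)
    return acc


def dot_mixed_precision(a: Sequence[float], b: Sequence[float]) -> float:
    """Dot product with FP16 inputs but an FP32 running sum (Tensor Core style)."""
    acc = 0.0
    for x, y in zip(a, b):
        # The product of two normal FP16 values fits exactly in FP32's
        # 24-bit significand, so only the accumulation rounds.
        prod = to_fp32(to_fp16(x) * to_fp16(y))
        acc = to_fp32(acc + prod)
    return acc


if __name__ == "__main__":
    a = [0.1] * 4096
    b = [1.0] * 4096
    reference = sum(to_fp16(x) for x in a)  # exact sum of the FP16-rounded inputs
    print("FP16 accumulate:", dot_fp16_accumulate(a, b))
    print("FP32 accumulate:", dot_mixed_precision(a, b))
    print("reference:      ", reference)
```

With 4096 terms of 0.1, the FP16 accumulator stalls at 256: between 256 and 512 adjacent FP16 values are 0.25 apart, so adding roughly 0.1 rounds back to the same sum. The FP32 accumulator reaches the reference value of 409.5.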

Intel VPU Solutions

Intel Movidius VPUs (Vision Processing Units) are optimized for computer vision workloads, with dedicated hardware for image preprocessing, feature extraction, and neural network inference. Their low power consumption (1-5W) suits battery-powered and embedded applications. The Intel OpenVINO toolkit optimizes models for VPU deployment and imports models from TensorFlow, PyTorch, and ONNX.
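The power figures above translate directly into battery runtime: runtime in hours is battery energy (Wh) divided by average power draw (W). A minimal sketch, where the 50 Wh battery and the 2W allowance for the rest of the system are hypothetical example values rather than figures for any specific product:

```python
def battery_runtime_hours(battery_wh: float, accelerator_w: float,
                          system_overhead_w: float = 0.0) -> float:
    """Estimate runtime as battery energy divided by total average power draw.

    system_overhead_w is a hypothetical allowance for the host CPU, camera,
    radios, and other components; real draw varies with workload.
    """
    total_w = accelerator_w + system_overhead_w
    if battery_wh <= 0 or total_w <= 0:
        raise ValueError("battery energy and power draw must be positive")
    return battery_wh / total_w


if __name__ == "__main__":
    BATTERY_WH = 50.0  # hypothetical battery capacity
    OVERHEAD_W = 2.0   # hypothetical rest-of-system draw
    for label, watts in [("VPU at 1 W", 1.0), ("VPU at 5 W", 5.0),
                         ("30 W module", 30.0)]:
        hours = battery_runtime_hours(BATTERY_WH, watts, OVERHEAD_W)
        print(f"{label}: {hours:.1f} h")
```

Under these assumptions the VPU runs for roughly 16.7 h at 1W and 7.1 h at 5W, versus about 1.6 h for a module drawing 30W.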

Google Coral Edge TPU

Edge TPU ASICs accelerate TensorFlow Lite models, achieving 100+ FPS on standard image classification networks while consuming under 2W. Models must be fully quantized to 8-bit integers and compiled with the Edge TPU Compiler before deployment. USB and M.2 modules add AI acceleration to existing systems. Their lower cost compared with GPUs suits high-volume deployments where TensorFlow Lite models meet the application's requirements.
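The Edge TPU runs only models whose weights and activations are quantized to 8-bit integers. TensorFlow Lite's quantization scheme maps real values to integers through an affine relation, `real = scale * (q - zero_point)`. The sketch below computes `scale` and `zero_point` from an observed value range and round-trips a few values. It is a simplified, per-tensor illustration of the arithmetic, assuming signed int8, not the TensorFlow Lite converter's actual implementation, and the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

QMIN, QMAX = -128, 127  # signed int8 range (assumed)


def choose_qparams(rmin: float, rmax: float) -> Tuple[float, int]:
    """Pick scale and zero_point so that [rmin, rmax] maps onto [QMIN, QMAX]."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    rmin, rmax = min(rmin, 0.0), max(rmax, 0.0)
    if rmax == rmin:  # all-zero tensor
        return 1.0, 0
    scale = (rmax - rmin) / (QMAX - QMIN)
    zero_point = round(QMIN - rmin / scale)
    zero_point = max(QMIN, min(QMAX, zero_point))
    return scale, zero_point


def quantize(values: Sequence[float], scale: float, zero_point: int) -> List[int]:
    """real -> int8: q = clamp(round(real / scale) + zero_point)."""
    return [max(QMIN, min(QMAX, round(v / scale) + zero_point)) for v in values]


def dequantize(qvalues: Sequence[int], scale: float, zero_point: int) -> List[float]:
    """int8 -> real: real = scale * (q - zero_point)."""
    return [scale * (q - zero_point) for q in qvalues]


if __name__ == "__main__":
    activations = [-1.0, -0.5, 0.0, 0.25, 2.0]
    scale, zp = choose_qparams(min(activations), max(activations))
    q = quantize(activations, scale, zp)
    print("scale:", scale, "zero_point:", zp)
    print("quantized:", q)
    print("dequantized:", dequantize(q, scale, zp))
```

Each dequantized value lands within half a quantization step (`scale / 2`) of the original, and 0.0 maps back to exactly 0.0, which matters for operations such as zero padding.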

Comparison and Selection

NVIDIA GPUs provide the most flexibility, supporting all major frameworks and custom models. Intel VPUs are optimized for computer vision at lower power consumption. Coral Edge TPUs typically offer the best power efficiency for TensorFlow Lite models. Selection depends on framework requirements, power budget, and performance needs.
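These criteria can be encoded as a first-pass filter. The sketch below is hypothetical: the power figures are the lower ends of the ranges quoted in this article (with Coral's "under 2W" treated as 2W), the framework sets are simplified from the text, and a real selection also needs measured performance on your own models.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Accelerator:
    name: str
    min_power_w: float          # lowest power figure quoted in this article
    frameworks: FrozenSet[str]  # model sources the toolchain accepts (simplified)


CANDIDATES = [
    Accelerator("NVIDIA Jetson", 5.0,
                frozenset({"tensorflow", "pytorch", "onnx", "tflite"})),
    Accelerator("Intel Movidius VPU", 1.0,
                frozenset({"tensorflow", "pytorch", "onnx"})),
    Accelerator("Google Coral Edge TPU", 2.0,  # quoted as "under 2W"
                frozenset({"tflite"})),
]


def shortlist(framework: str, power_budget_w: float) -> List[str]:
    """Return accelerators that accept the framework and fit the power budget,
    lowest power first. Candidates that pass still need a POC benchmark."""
    fits = [acc for acc in CANDIDATES
            if framework.lower() in acc.frameworks
            and acc.min_power_w <= power_budget_w]
    return [acc.name for acc in sorted(fits, key=lambda acc: acc.min_power_w)]


if __name__ == "__main__":
    print(shortlist("tflite", 3.0))    # ['Google Coral Edge TPU']
    print(shortlist("pytorch", 3.0))   # ['Intel Movidius VPU']
    print(shortlist("pytorch", 15.0))  # ['Intel Movidius VPU', 'NVIDIA Jetson']
```

A filter like this only narrows the field; ranking the survivors requires the latency, throughput, and cost measurements a proof of concept provides.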

FAQ

Which accelerator should I choose?

For maximum flexibility and performance, choose NVIDIA GPUs. For power-constrained computer vision, choose Intel VPUs. For cost-sensitive TensorFlow Lite deployments, choose Coral Edge TPUs. Evaluate candidates against your model framework, power budget, performance requirements, and deployment scale, then validate the choice with a proof of concept (POC) on each candidate accelerator.
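A POC comparison needs the same measurements on every candidate. The sketch below times any inference callable and reports latency percentiles and throughput. `infer` and `sample_input` are hypothetical placeholders for the vendor runtime call (for example a TensorRT, OpenVINO, or Edge TPU interpreter invocation) and a representative input. It measures sequential, single-stream latency only; batched or multi-stream throughput needs a different harness.

```python
import math
import time
from typing import Any, Callable, Dict, List


def percentile(sorted_values: List[float], pct: float) -> float:
    """Nearest-rank percentile of an already sorted list (pct in (0, 100])."""
    rank = max(1, math.ceil(pct * len(sorted_values) / 100))
    return sorted_values[rank - 1]


def benchmark(infer: Callable[[Any], Any], sample_input: Any,
              warmup: int = 10, iterations: int = 200) -> Dict[str, float]:
    """Time sequential single-input inference calls on one accelerator."""
    if iterations <= 0:
        raise ValueError("iterations must be positive")
    for _ in range(warmup):  # let caches, clocks, and lazy initialization settle
        infer(sample_input)
    latencies_ms: List[float] = []
    start = time.perf_counter()
    for _ in range(iterations):
        t0 = time.perf_counter()
        infer(sample_input)
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    elapsed_s = time.perf_counter() - start
    latencies_ms.sort()
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p99_ms": percentile(latencies_ms, 99),
        "max_ms": latencies_ms[-1],
        "throughput_fps": iterations / elapsed_s,
    }


if __name__ == "__main__":
    # Stand-in workload; replace with the real runtime call for each accelerator.
    def fake_infer(n: int) -> int:
        return sum(i * i for i in range(n))

    print(benchmark(fake_infer, 10_000))
```

Reporting p99 alongside p50 matters for real-time applications, because occasional slow frames, not the median, usually determine whether a latency budget is met.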

Can multiple accelerators work together?

Yes. Some applications benefit from heterogeneous acceleration: for example, a VPU handles image preprocessing while a GPU runs inference, or multiple accelerators process parallel streams. Software frameworks such as NVIDIA DeepStream and Intel OpenVINO support multi-accelerator configurations, each primarily across its own vendor's hardware.
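The preprocessing-plus-inference split can be sketched as a two-stage pipeline: one thread prepares frames (the stage a VPU might take) while the main thread runs inference (the stage a GPU might take), with a bounded queue between them so the stages overlap without unbounded buffering. `preprocess` and `infer` are hypothetical stand-ins for device-specific calls; frameworks such as DeepStream and OpenVINO manage this scheduling themselves, so this only illustrates the pattern.

```python
import queue
import threading
from typing import Any, Callable, Iterable, List

_DONE = object()  # sentinel marking the end of the stream


def run_two_stage_pipeline(frames: Iterable[Any],
                           preprocess: Callable[[Any], Any],
                           infer: Callable[[Any], Any],
                           depth: int = 4) -> List[Any]:
    """Overlap preprocessing (stage 1) with inference (stage 2), preserving order."""
    handoff = queue.Queue(maxsize=depth)  # bounded buffer between the stages
    errors: List[Exception] = []

    def stage_one() -> None:
        try:
            for frame in frames:
                handoff.put(preprocess(frame))  # blocks when stage 2 falls behind
        except Exception as exc:  # record the failure so the caller can re-raise it
            errors.append(exc)
        finally:
            handoff.put(_DONE)  # always unblock stage 2

    worker = threading.Thread(target=stage_one, daemon=True)
    worker.start()

    results: List[Any] = []
    while True:
        item = handoff.get()
        if item is _DONE:
            break
        results.append(infer(item))
    worker.join()
    if errors:
        raise errors[0]
    return results


if __name__ == "__main__":
    out = run_two_stage_pipeline(range(8), preprocess=lambda f: f * 2,
                                 infer=lambda t: t + 1)
    print(out)  # [1, 3, 5, 7, 9, 11, 13, 15]
```

A single producer and a single FIFO queue keep results in input order; processing parallel streams on multiple accelerators would instead give each stream its own pipeline or dispatch frames round-robin and reorder afterwards.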