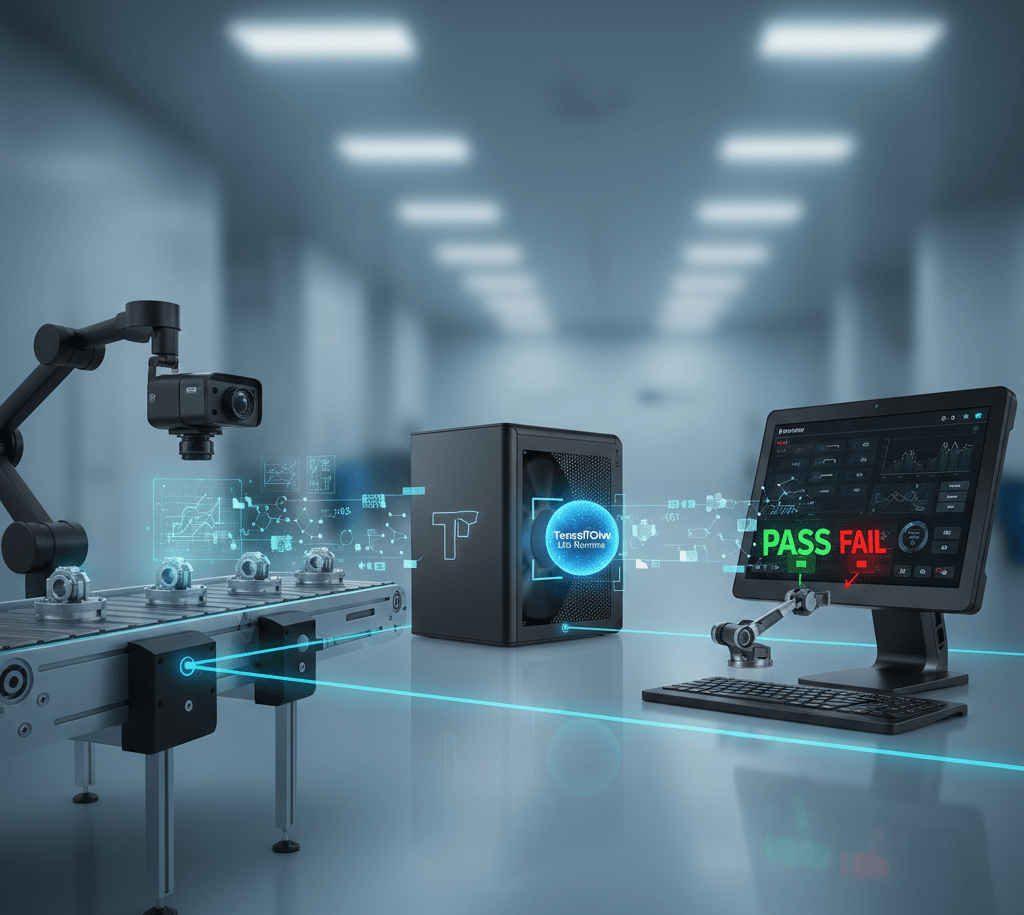

TensorFlow Lite Edge Inference Platform Solutions

TensorFlow is one of the most widely used machine learning frameworks, backed by comprehensive tooling and an extensive community. TensorFlow Lite brings these capabilities to resource-constrained edge devices, enabling trained models to be deployed on industrial embedded systems. Advantech edge platforms support TensorFlow Lite inference, delivering AI capabilities without cloud dependencies.

Model Conversion and Optimization

The TensorFlow Lite Converter transforms trained TensorFlow models into a compact .tflite format for edge deployment. Quantization reduces model size roughly 4x by converting 32-bit floating-point weights to 8-bit integers, typically with minimal accuracy loss. Operator fusion combines multiple operations into one (for example, merging an activation function into the preceding convolution), reducing computational overhead. Together, these optimizations make it practical to run sophisticated models on CPU-only embedded systems with no GPU.
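To make the 4x figure concrete, the following is a minimal pure-Python sketch of per-tensor affine int8 quantization, assuming a single scale and zero point for the whole tensor. It is a simplified illustration of the idea, not the TensorFlow Lite Converter's actual algorithm (which can, for example, quantize weights per channel); the helper names `quantize_int8` and `dequantize_int8` are hypothetical.

```python
from array import array


# Hypothetical helper: a simplified illustration, not the TFLite converter's code.
def quantize_int8(weights):
    """Map float weights to int8 with one per-tensor scale and zero point (affine scheme)."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    w_min = min(min(weights), 0.0)
    w_max = max(max(weights), 0.0)
    # 256 int8 levels give 255 steps; fall back to 1.0 if every weight is zero.
    scale = (w_max - w_min) / 255.0 or 1.0
    # Choose the zero point so that w_min maps to -128.
    zero_point = max(-128, min(127, round(-128 - w_min / scale)))
    # Round each weight to the nearest step and clamp to the int8 range.
    q = array("b", (max(-128, min(127, round(w / scale) + zero_point)) for w in weights))
    return q, scale, zero_point


# Hypothetical helper: inverse mapping used to measure the rounding error.
def dequantize_int8(q, scale, zero_point):
    """Recover approximate float values from the int8 codes."""
    return [(v - zero_point) * scale for v in q]


weights = [-0.82, -0.10, 0.0, 0.37, 1.25]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize_int8(q, scale, zero_point)

fp32 = array("f", weights)  # 'f' is a C float: 4 bytes on mainstream platforms
print("float32 bytes:", fp32.itemsize * len(fp32))
print("int8 bytes:   ", q.itemsize * len(q))
print("max abs error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each weight shrinks from 4 bytes to 1, which is where the roughly 4x reduction comes from. The rounding error per weight is at most about half a quantization step (`scale / 2`), which is why the accuracy loss is usually small.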

Cross-Platform Compatibility

TensorFlow Lite runs on ARM and x86 processors as well as various microcontroller architectures, providing deployment flexibility. Python and C++ APIs enable integration with existing industrial software. Built-in operator libraries accelerate common neural network operations, and custom operator support accommodates specialized model architectures.

Edge TPU Acceleration

Google Coral Edge TPU coprocessors significantly accelerate TensorFlow Lite inference, achieving 100+ inferences per second on MobileNet models while consuming under 2 W. Models must be quantized to 8-bit integers and compiled with the Edge TPU Compiler to run on the accelerator. USB and M.2 form factors integrate with Advantech industrial computers, adding AI acceleration without custom hardware development.
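As a rough worked example using only the figures quoted for the Edge TPU (at least 100 inferences per second at under 2 W), the average time and energy budget per inference follow directly. These are throughput-based averages under those two stated bounds, not measured latency or power figures:

```latex
t_{\text{inf}} \le \frac{1}{100\ \text{s}^{-1}} = 10\ \text{ms},
\qquad
E_{\text{inf}} = \frac{P}{\text{throughput}} \le \frac{2\ \text{W}}{100\ \text{s}^{-1}} = 0.02\ \text{J} = 20\ \text{mJ}
```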

FAQ

How does TensorFlow Lite compare to full TensorFlow?

TensorFlow Lite is optimized for inference (running trained models) rather than training. Its smaller runtime, lower memory usage, and optimized operators suit edge deployment. Full TensorFlow is still needed to train models on servers or in the cloud before they are converted to TensorFlow Lite for edge deployment.

Can existing TensorFlow models deploy to TensorFlow Lite?

Most models convert successfully, though some TensorFlow operations lack TensorFlow Lite implementations. The converter reports unsupported operations, allowing the model to be retrained with compatible alternatives. Standard architectures (MobileNet, ResNet, EfficientNet) convert reliably.