NVIDIA GPU Edge AI Computer Systems

Artificial intelligence transforms industrial operations through computer vision quality inspection, predictive maintenance, process optimization, and autonomous systems. Training deep learning models requires massive cloud GPU resources, but deploying those models for real-time inference demands edge computing that brings AI capabilities directly into production environments. Advantech NVIDIA GPU-powered edge AI computers deliver the computational performance needed to run sophisticated neural networks locally, enabling millisecond-latency decisions without cloud dependencies.

NVIDIA dominates AI acceleration through GPUs that were originally designed for graphics rendering but are exceptionally well suited to the parallel matrix operations underlying neural network inference. The CUDA (Compute Unified Device Architecture) programming framework and the cuDNN (CUDA Deep Neural Network) library optimize deep learning performance on NVIDIA hardware. The TensorRT inference optimizer converts trained models to optimized formats that can achieve a 10-40x speedup compared to CPU execution. This ecosystem maturity makes NVIDIA the de facto standard for edge AI deployments.

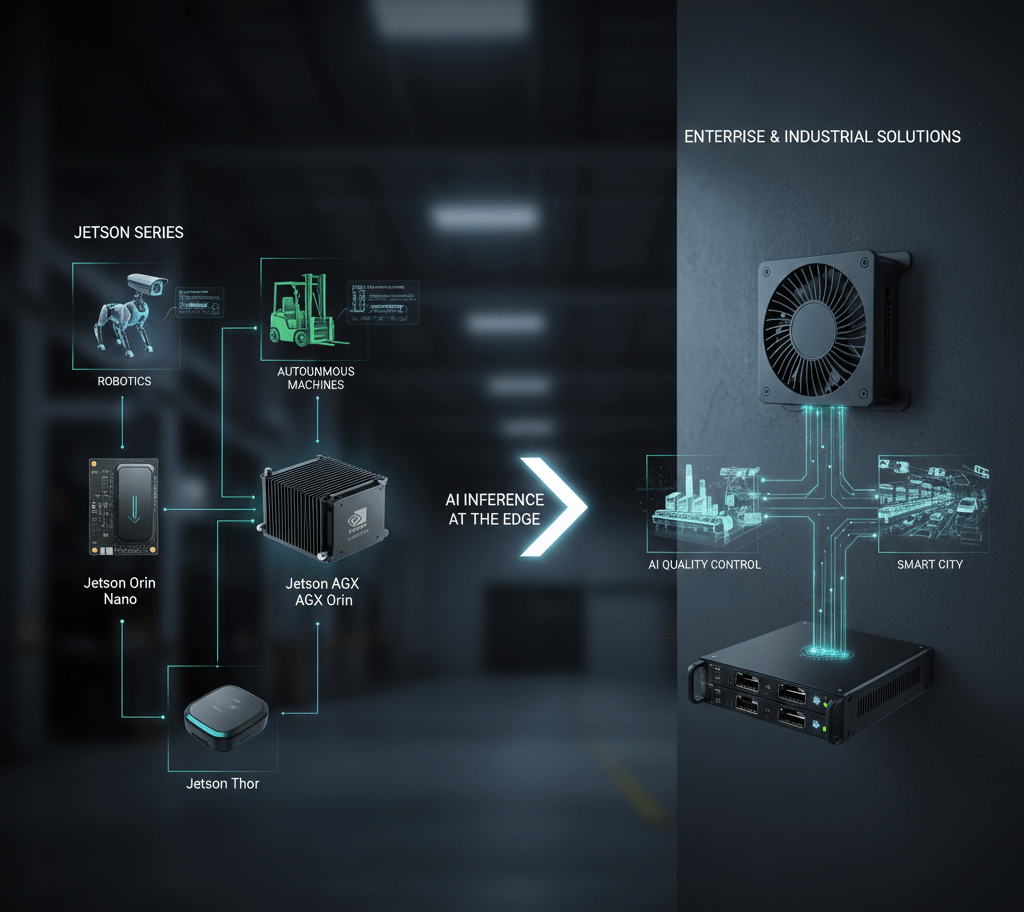

NVIDIA Jetson Embedded AI Platforms

The Jetson family specifically targets embedded and edge AI applications, offering complete systems-on-module that combine GPU, CPU, memory, and AI acceleration in compact form factors consuming 5-30 watts. Jetson Nano provides entry-level 472 GFLOPS (FP16) performance suitable for single-camera inference applications at under 10W power consumption. Jetson Xavier NX delivers 21 TOPS (trillion operations per second) of AI performance, handling multiple camera streams simultaneously. Jetson AGX Xavier provides up to 32 TOPS for demanding applications like autonomous mobile robots requiring sensor fusion from cameras, LIDAR, and radar.

Advantech carrier boards integrate Jetson modules with industrial I/O, rugged enclosures, and extended temperature operation, transforming development modules into deployable industrial systems. Industrial Ethernet connectivity, isolated digital I/O, serial ports, and CAN bus interfaces enable integration with manufacturing equipment, robotics, and automation systems. Extended temperature operation from -20°C to 60°C and conformal coating suit factory floor deployments that experience temperature swings, humidity, and contamination.

NVIDIA Tesla and RTX GPU Accelerators

High-performance edge AI applications that process dozens of camera streams, complex model ensembles, or real-time video analytics benefit from discrete GPU accelerators. NVIDIA T4 Tensor Core GPUs deliver 130 TOPS of INT8 performance in a 70W power envelope suitable for edge servers. The RTX A2000 provides ray tracing capabilities alongside AI inference for applications combining 3D visualization with machine learning. These accelerators plug into PCIe slots in industrial computers, providing upgrade paths as AI models grow more sophisticated.

Multi-GPU configurations scale performance for demanding applications. Autonomous vehicle perception systems might employ four cameras per vehicle side, requiring parallel processing across multiple GPUs. Quality inspection systems that simultaneously analyze products from multiple production lines distribute inference workloads across GPU arrays. On GPUs that support it, NVLink high-speed interconnects enable GPU-to-GPU communication faster than PCIe, benefiting model parallelism where a single neural network spans multiple GPUs.
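The simplest way to distribute inference workloads is to assign whole camera streams to GPUs, so each stream runs on a single device. The following is a minimal sketch of round-robin assignment; the function name, the stream labels, and the two-GPU setup are illustrative assumptions, and a real scheduler would also weigh per-stream load and GPU memory.

```python
from collections import defaultdict


def assign_streams(stream_ids, gpu_count):
    """Assign camera streams to GPU indices in round-robin order."""
    if gpu_count < 1:
        raise ValueError("gpu_count must be at least 1")
    assignment = defaultdict(list)
    for position, stream_id in enumerate(stream_ids):
        assignment[position % gpu_count].append(stream_id)
    return dict(assignment)


# Hypothetical example: four cameras per vehicle side, spread over two GPUs.
cameras = [f"left-{i}" for i in range(4)] + [f"right-{i}" for i in range(4)]
plan = assign_streams(cameras, gpu_count=2)

for gpu_index, streams in sorted(plan.items()):
    print(f"GPU {gpu_index}: {streams}")
```

This is data parallelism across streams. It does not cover model parallelism, where one network is split across devices and interconnect bandwidth matters most.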

Computer Vision and Image Processing

Manufacturing quality inspection is one of the most prevalent edge AI applications, detecting defects that human inspectors miss while inspecting 100% of products, a coverage level that sampling cannot provide. Convolutional neural networks trained on thousands of defect examples identify scratches, dents, missing components, incorrect assembly, and dimensional variations. Edge AI computers process camera images at line speeds – 60+ frames per second – classifying each product as pass/fail and logging defect images for analysis.

Optical character recognition (OCR) verifies serial numbers, lot codes, and expiration dates on products and packaging. Traditional template-matching OCR fails with font variations, printing defects, or perspective distortions. Deep learning OCR is far more tolerant of these variations, reading text across a wide range of angles, lighting conditions, and print qualities. Applications include pharmaceutical serialization compliance, automotive part traceability, and food packaging verification.

Object Detection and Tracking

Warehouse automation employs computer vision for inventory management, identifying products on shelves and pallets without barcode scanning. Object detection networks locate and classify items, updating inventory systems in real time. Forklift safety systems detect pedestrians and obstacles, triggering warnings or automatic braking to prevent collisions. These systems process video at 30 fps, tracking multiple objects simultaneously while maintaining sub-50ms latency for safety-critical decisions.
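The 30 fps and sub-50ms figures describe two different constraints: throughput (a new frame arrives roughly every 33 ms) and end-to-end latency (the time from capture to decision). The sketch below checks both for a pipeline; the stage names and their timings are hypothetical placeholders, not measurements from any particular system.

```python
def frame_interval_ms(fps):
    """Time between consecutive frames at the given frame rate."""
    return 1000.0 / fps


def end_to_end_latency_ms(stage_ms):
    """Latency of one frame passing through every stage in sequence."""
    return sum(stage_ms.values())


def sustains_frame_rate(stage_ms, fps):
    """With pipelined stages, throughput is limited by the slowest stage."""
    return max(stage_ms.values()) <= frame_interval_ms(fps)


# Hypothetical per-stage timings in milliseconds.
stages = {
    "capture": 8.0,
    "preprocess": 4.0,
    "inference": 18.0,
    "tracking": 5.0,
    "alert": 3.0,
}

total_ms = end_to_end_latency_ms(stages)
within_budget = total_ms <= 50.0
sustains_30_fps = sustains_frame_rate(stages, fps=30)

print(f"latency {total_ms:.1f} ms, within 50 ms budget: {within_budget}")
print(f"sustains 30 fps when pipelined: {sustains_30_fps}")
```

In this example the total latency exceeds one frame interval, so the stages would need to overlap across frames to keep up with the camera even though the latency budget is met.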

Retail analytics track customer movements through stores, measuring dwell times at displays, traffic patterns between departments, and queue lengths at checkouts. Heatmaps visualize customer behavior to inform store layout optimization. Privacy-preserving implementations detect and track people without facial recognition, maintaining customer anonymity while generating actionable insights.
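Dwell time can be derived from tracker output alone, without any identity information. The following sketch assumes a tracker that emits time-ordered `(timestamp_seconds, zone)` observations for one anonymous track; that record layout and the zone names are assumptions made for illustration.

```python
from collections import defaultdict


def dwell_times(observations):
    """Sum the time spent in each zone for one anonymous track.

    observations: list of (timestamp_seconds, zone), sorted by time.
    Each interval is credited to the zone seen at its start, so the
    final observation only closes the previous interval.
    """
    totals = defaultdict(float)
    for (start, zone), (end, _next_zone) in zip(observations, observations[1:]):
        totals[zone] += end - start
    return dict(totals)


# Hypothetical track: no face or identity data, only positions mapped to zones.
track = [
    (0.0, "entrance"),
    (2.0, "display_a"),
    (9.0, "display_a"),
    (12.0, "checkout"),
    (20.0, "checkout"),
]

result = dwell_times(track)
print(result)
```

Aggregating these per-track totals by zone gives the dwell-time statistics described above.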

Predictive Maintenance and Anomaly Detection

Equipment failures cause expensive unplanned downtime and potential safety hazards. Predictive maintenance AI models analyze sensor data – vibration, temperature, current, acoustic emissions – predicting failures hours or days before occurrence. Edge AI computers process sensor streams continuously, comparing current signatures against learned normal behavior patterns. Deviations trigger maintenance alerts enabling proactive repairs during planned downtime rather than emergency responses.
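Comparing a current reading against learned normal behavior can be illustrated with a simple statistical baseline. This sketch learns the mean and standard deviation of a sensor during normal operation and flags readings more than three standard deviations away; the vibration values and the threshold of 3.0 are assumptions, and production systems typically use richer features and models.

```python
from statistics import mean, stdev


def fit_baseline(normal_readings):
    """Learn the mean and sample standard deviation of normal operation."""
    return mean(normal_readings), stdev(normal_readings)


def is_deviation(value, baseline, threshold=3.0):
    """Flag a reading that lies more than `threshold` sigmas from the mean."""
    mu, sigma = baseline
    return abs(value - mu) > threshold * sigma


# Hypothetical vibration amplitudes (mm/s) recorded during healthy operation.
normal_vibration = [2.0, 2.1, 1.9, 2.0, 2.2, 1.8, 2.0, 2.1, 1.9, 2.0]
baseline = fit_baseline(normal_vibration)

for reading in (2.1, 3.0):
    status = "ALERT" if is_deviation(reading, baseline) else "ok"
    print(f"reading {reading}: {status}")
```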

Anomaly detection models learn normal equipment operation without requiring labeled failure examples, addressing the challenge that failure data is scarce in well-maintained operations. Autoencoders learn to reconstruct normal operation patterns, so a high reconstruction error indicates an anomaly. Classical methods such as one-class SVMs and isolation forests identify outliers in multi-dimensional sensor spaces. Because none of these approaches depends on labeled failures, they can detect novel failure modes never seen during training.
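The reconstruction-error idea can be shown without a neural network. In the sketch below a deliberately trivial stand-in replaces the trained autoencoder: it "reconstructs" every input as the per-feature mean of the normal data. Only the thresholding logic carries over to a real autoencoder; the stand-in model, the sensor values, and the 1.5x margin are all assumptions.

```python
from statistics import mean


def fit_mean_reconstructor(normal_samples):
    """Stand-in for a trained autoencoder (NOT a real one):
    reconstructs any input as the per-feature mean of normal data."""
    feature_means = [mean(column) for column in zip(*normal_samples)]
    return lambda sample: feature_means


def reconstruction_error(sample, reconstruct):
    """Mean squared error between a sample and its reconstruction."""
    rebuilt = reconstruct(sample)
    return mean([(x - y) ** 2 for x, y in zip(sample, rebuilt)])


def calibrate_threshold(normal_samples, reconstruct, margin=1.5):
    """Set the alarm threshold above the worst error seen on normal data."""
    errors = [reconstruction_error(s, reconstruct) for s in normal_samples]
    return margin * max(errors)


# Hypothetical (vibration, temperature) samples from healthy operation.
normal = [[1.0, 20.0], [1.2, 21.0], [0.8, 19.0], [1.0, 20.0]]
reconstruct = fit_mean_reconstructor(normal)
threshold = calibrate_threshold(normal, reconstruct)

for sample in ([1.1, 20.5], [3.0, 35.0]):
    error = reconstruction_error(sample, reconstruct)
    label = "anomaly" if error > threshold else "normal"
    print(f"{sample}: error {error:.2f} -> {label}")
```

No failure examples are needed at any point: the threshold is calibrated from normal data only.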

Natural Language Processing at the Edge

Voice-controlled industrial equipment enables hands-free operation in environments where workers wear gloves, safety equipment, or handle materials preventing touchscreen interaction. Edge AI computers run speech recognition models locally, converting voice commands to equipment control signals without cloud connectivity. Applications include warehouse picking systems where workers verbally confirm picked items, manufacturing assembly guidance providing voice instructions, and maintenance procedures offering hands-free access to technical documentation.

Language translation assists international operations where multilingual workforces benefit from real-time translation of safety instructions, work procedures, and communications. Edge deployment maintains privacy as sensitive business communications never leave premises. Offline operation ensures continued functionality during network outages inevitable in industrial environments.

Model Optimization and Inference Performance

Neural networks trained in cloud environments using FP32 (32-bit floating point) precision achieve maximum accuracy but require substantial computational resources. TensorRT optimization converts models to INT8 (8-bit integer) precision, reducing memory requirements 4x and increasing inference speed 2-4x with minimal accuracy loss (<1% typical). Quantization-aware training during model development further improves INT8 accuracy by simulating quantization effects.
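The 4x memory reduction follows directly from storing one byte per weight instead of four. The sketch below shows symmetric per-tensor INT8 quantization in plain Python to make the arithmetic concrete. It is an illustration of the general idea, not TensorRT's calibration procedure, and the weight values are made up.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map INT8 codes back to approximate floating-point values."""
    return [q * scale for q in quantized]


# Hypothetical FP32 weights from one layer.
weights = [0.50, -1.27, 0.031, 0.9, -0.004]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding to the nearest code bounds the error by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))

fp32_bytes = 4 * len(weights)  # 32-bit floats
int8_bytes = 1 * len(weights)  # 8-bit integers

print(f"codes: {q}")
print(f"max round-trip error: {max_error:.4f} (step {scale:.4f})")
print(f"memory: {fp32_bytes} bytes -> {int8_bytes} bytes")
```

Small weights such as the last one collapse to zero, which is the kind of effect quantization-aware training lets the model compensate for.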

Model pruning removes redundant neural network weights identified through sensitivity analysis. Structured pruning eliminates entire channels or layers, achieving 30-50% size reductions with 1-2% accuracy trade-offs. Knowledge distillation trains compact “student” models mimicking larger “teacher” models, capturing essential decision-making capabilities in smaller architectures suitable for edge deployment. These optimizations enable deploying sophisticated models on resource-constrained edge hardware.
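Structured pruning can be sketched as ranking channels by importance and dropping the weakest ones whole. The example below uses the L1 norm of each channel's weights as the importance score, a simpler stand-in for the sensitivity analysis mentioned above; the layer values and the 50% keep ratio are illustrative assumptions.

```python
def channel_l1_norms(channels):
    """Importance score per channel: sum of absolute weight values."""
    return [sum(abs(w) for w in channel) for channel in channels]


def prune_channels(channels, keep_ratio):
    """Keep the highest-scoring channels and drop the rest entirely."""
    if not 0 < keep_ratio <= 1:
        raise ValueError("keep_ratio must be in (0, 1]")
    keep_count = max(1, round(len(channels) * keep_ratio))
    norms = channel_l1_norms(channels)
    ranked = sorted(range(len(channels)), key=lambda i: norms[i], reverse=True)
    kept = sorted(ranked[:keep_count])  # preserve the original channel order
    return kept, [channels[i] for i in kept]


# Hypothetical layer with four output channels of three weights each.
layer = [
    [0.9, -0.8, 0.7],
    [0.01, 0.02, -0.01],
    [0.5, 0.4, -0.6],
    [0.0, 0.03, 0.0],
]

kept_indices, pruned_layer = prune_channels(layer, keep_ratio=0.5)
print(f"kept channels: {kept_indices}")
```

In practice the pruned network is fine-tuned afterwards to recover part of the accuracy lost by removing channels.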

Real-World Deployment Example

An automotive component manufacturer deployed Advantech edge AI computers with Jetson Xavier NX modules at 12 quality inspection stations across three production lines manufacturing fuel injectors. Each station captures images of injectors from four angles using synchronized industrial cameras. Convolutional neural networks detect surface defects, dimensional variations, and assembly errors at 100% inspection rates versus 10% sampling with human inspectors. The system identified 15% more defects than previous sampling methods while reducing inspection labor costs by 60%. False positive rates under 2% maintain production flow without excessive rejections. Edge processing eliminates network bandwidth requirements for transmitting high-resolution images to central servers.

Frequently Asked Questions

What makes NVIDIA GPUs suitable for edge AI?

NVIDIA GPUs provide massive parallel processing capabilities ideal for neural network inference. Tensor cores accelerate the matrix operations underlying deep learning. A mature software ecosystem (CUDA, cuDNN, TensorRT) optimizes AI workloads. Jetson embedded platforms integrate GPU, CPU, and AI accelerators in compact, power-efficient packages suitable for edge deployment.

How much AI performance do different applications require?

Requirements vary widely. Single-camera quality inspection might need 5-10 TOPS. Multi-camera surveillance requires 20-30 TOPS. Autonomous mobile robots with sensor fusion demand 50+ TOPS. Applications involving large models, high-resolution images, or real-time video need more performance. Start with a workload analysis that determines FPS requirements and model complexity.
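A workload analysis can start from a back-of-the-envelope estimate: operations per frame, times frame rate, times camera count, divided by the fraction of peak throughput the hardware realistically sustains. The sketch below does that arithmetic. The 10 billion operations per frame and the 30% utilization figure are hypothetical inputs, and real sizing should be confirmed by benchmarking the actual model.

```python
def required_tops(ops_per_frame, fps, cameras, utilization=0.3):
    """Estimate the peak TOPS rating needed for a vision workload.

    ops_per_frame: operations for one inference (roughly 2 x MACs)
    utilization:   assumed fraction of peak throughput actually achieved
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    sustained_ops_per_second = ops_per_frame * fps * cameras
    return sustained_ops_per_second / utilization / 1e12


# Hypothetical workload: a 10-GOP model, four cameras at 30 fps.
estimate = required_tops(ops_per_frame=10e9, fps=30, cameras=4)
print(f"estimated requirement: {estimate:.1f} TOPS")
```

Doubling the frame rate or the camera count doubles the estimate, which is why FPS requirements and model complexity are the first numbers to pin down.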

Can edge AI systems operate without internet connectivity?

Yes, edge AI specifically enables offline operation. Models deploy to edge computers running inference locally without cloud dependencies. This provides low latency, data privacy, and continued operation during network outages. Cloud connectivity remains useful for model updates, remote monitoring, and aggregating insights across multiple edge deployments.

What frameworks and tools support NVIDIA edge AI development?

TensorFlow, PyTorch, and other major frameworks support NVIDIA hardware, and the ONNX interchange format carries models between frameworks and into TensorRT. The NVIDIA DeepStream SDK optimizes video analytics pipelines. TAO Toolkit simplifies transfer learning and model optimization. Triton Inference Server manages model deployment. The JetPack SDK provides a complete development environment for Jetson platforms.

How difficult is deploying AI models to edge devices?

Complexity varies. Pre-trained models from the NVIDIA NGC catalog or model zoos deploy quickly with minimal customization. Custom models require training data collection, model training (often in the cloud), optimization via TensorRT, and integration with application logic. Advantech provides reference designs and support that accelerate deployment timelines.

What about power consumption for edge AI?

Jetson Nano consumes 5-10W, which suits battery-powered applications. Jetson Xavier NX uses 10-20W for moderate performance. High-end edge servers with discrete GPUs consume 100-300W. Select a platform that balances performance requirements against the power budget and thermal constraints of the deployment environment.

Can existing cameras work with AI inference systems?

Most industrial cameras with Ethernet (GigE Vision) or USB3 interfaces work with AI systems. Higher resolution cameras (5MP+) provide more detail for defect detection but require more processing power. Frame rates, lighting, and lens selection significantly impact AI accuracy. Camera selection should align with AI model requirements.

How do I get started with edge AI for my application?

Start with a feasibility assessment: collect sample images or data, evaluate whether AI approaches show promise, and estimate the required performance. Develop a proof of concept using development kits or cloud resources. Once validated, deploy optimized models to industrial edge AI systems. Partner with system integrators or AI consultants for complex applications requiring custom model development.